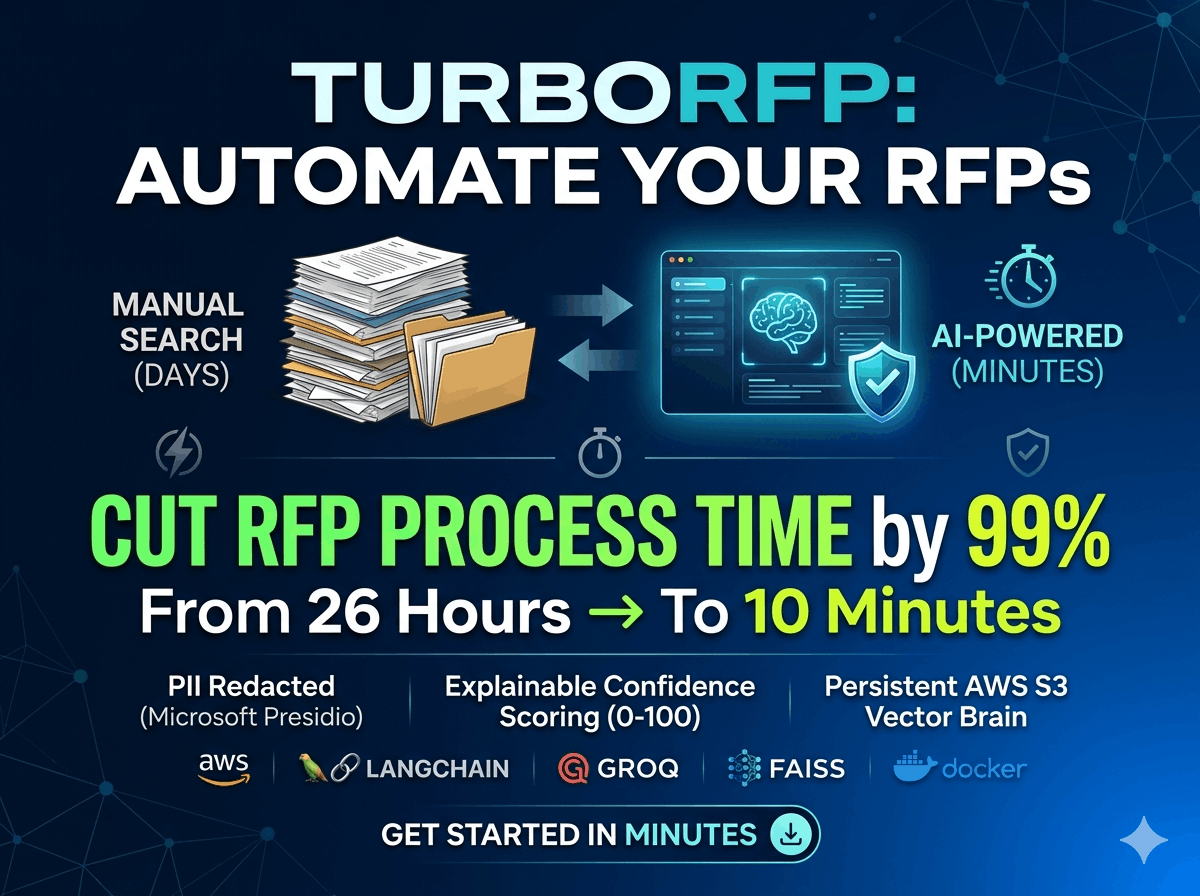

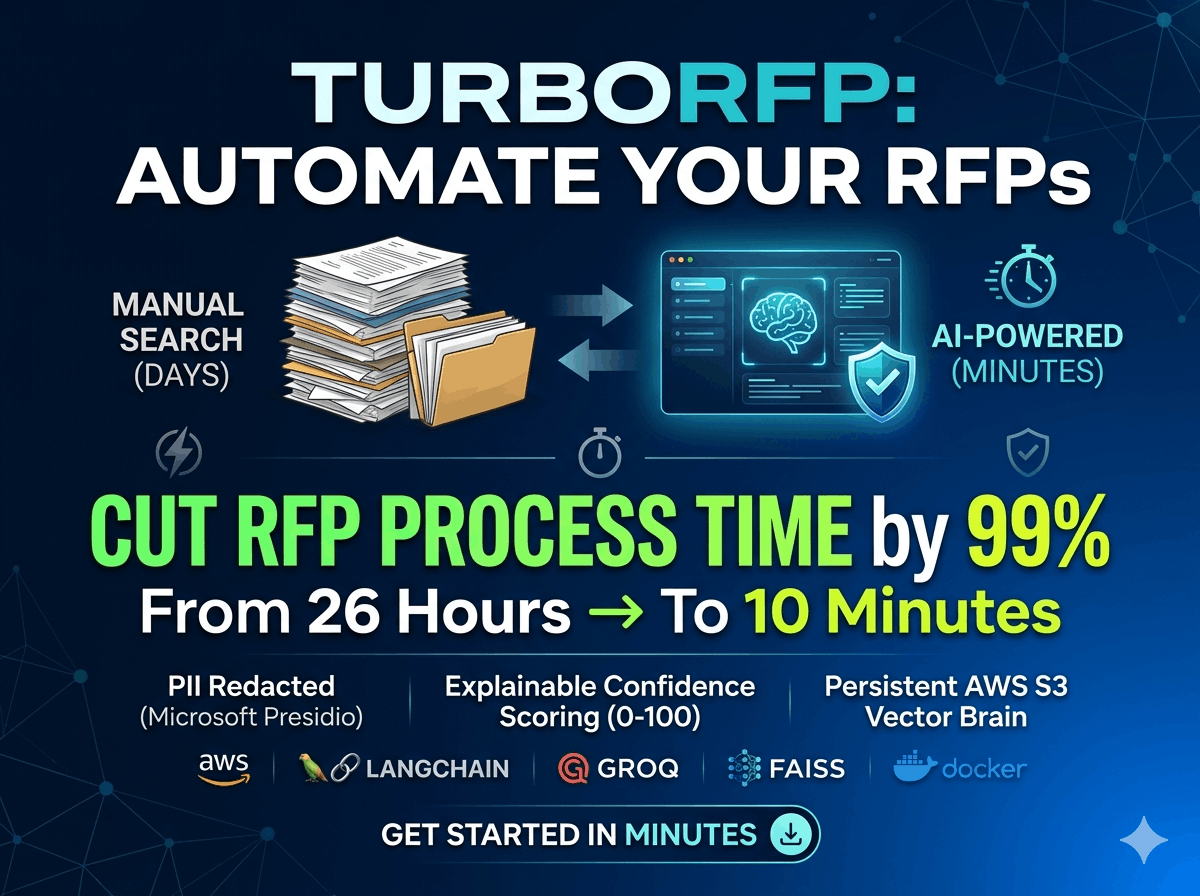

TurboRFP: Enterprise RFP Questionnaire Automator

End-to-end RAG pipeline designed to automate the extraction and answering process

About

GitHub Stats (at time of listing): 1 star, 0 forks, 0 open issues; last commit: February 24, 2026

🕵️ TurboRFP: Enterprise RFP & Security Questionnaire Automator (A Business-to-Business Problem Solver)

- Live Demo of the Project: http://13.53.125.103/
- Video of the Project Running in the Terminal: https://youtu.be/srC5kR8GF-0?si=w9Dq9bfZb0DSY9mn

📌 The Problem: The "40-Hour" Compliance Bottleneck

Enterprise sales teams face a massive hurdle: security RFPs. Companies must answer hundreds of complex technical questions (e.g., "How is data encrypted at rest in your DB?") by manually searching through 100+ page security policies, AWS/Azure whitepapers, and SOC 2 reports.

- Manual Effort: Takes 3–5 days per RFP.
- Risk: High chance of human error and inaccurate answers.
- Scalability: Impossible to handle 10+ RFPs simultaneously without a massive team.

🚀 The Solution: TurboRFP

TurboRFP is an end-to-end RAG (Retrieval-Augmented Generation) pipeline designed to automate the extraction and answering process. It transforms unstructured PDF policies into a searchable vector "brain" and provides grounded, high-confidence answers to CSV-based questionnaires in minutes.

Automate your security questionnaires and RFPs with the power of generative AI. NexusAI is an advanced RAG application designed to drastically reduce the time spent answering Request for Information (RFI) and Request for Proposal (RFP) documents.
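To make the "searchable vector brain" idea concrete, here is a toy, dependency-free sketch of the retrieval half of a RAG pipeline. It chunks a policy document, embeds each chunk, and returns the chunks closest to a question. This is not TurboRFP's code. A hashed bag-of-words vector stands in for the real embedding model (Gemini or all-MiniLM-L6-v2), and brute-force inner-product search stands in for FAISS. The names `chunk_text`, `embed`, and `ToyVectorIndex`, along with the sample policy text, are all hypothetical.

```python
import math
import re
import zlib

DIM = 256  # size of the toy embedding space (assumption, not the real model's dimension)


def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap by `overlap` chars."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    return [text[i:i + chunk_size] for i in range(0, max(len(text) - overlap, 1), step)]


def embed(text: str) -> list[float]:
    """Toy embedding: hashed bag-of-words counts, scaled to unit length."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(token.encode()) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class ToyVectorIndex:
    """Exact (brute-force) inner-product search over stored chunk vectors."""

    def __init__(self) -> None:
        self.chunks: list[str] = []
        self.vectors: list[list[float]] = []

    def add(self, chunks: list[str]) -> None:
        for chunk in chunks:
            self.chunks.append(chunk)
            self.vectors.append(embed(chunk))

    def search(self, query: str, k: int = 2) -> list[tuple[float, str]]:
        q = embed(query)
        # On unit-length vectors the inner product equals cosine similarity.
        scored = [(sum(a * b for a, b in zip(q, v)), c) for v, c in zip(self.vectors, self.chunks)]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


# Made-up policy text, for illustration only.
policy = (
    "All customer data is encrypted at rest using AES-256. "
    "Access to production systems requires multi-factor authentication. "
    "Backups are stored in a separate region and retained for 30 days."
)
index = ToyVectorIndex()
index.add(chunk_text(policy, chunk_size=80, overlap=20))
results = index.search("How is data encrypted at rest in your DB?", k=1)
```

Every vector is scaled to unit length, so the inner product equals the cosine similarity. This is also why `normalize_embeddings=True` is a natural setting when embeddings feed an inner-product index. In a full pipeline, the retrieved chunks would be placed into the LLM prompt as grounding context.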
By leveraging your internal knowledge base, the system provides accurate, context-aware, and secure responses.

🏗️ Architecture

The system follows a modern RAG architecture and uses a hybrid cloud approach for scalability and security.

🔄 The S3-to-EC2 Data Lifecycle

The system keeps the "Knowledge Brain" (the FAISS index) persistent, scalable, and decoupled from the compute instance.

- Ingestion & Embedding: When a document is uploaded, the RAG engine chunks the data and generates high-dimensional embeddings using the Gemini embedding model.
- Local Indexing: A temporary FAISS index is built in the EC2 instance's RAM and saved to the local disk.
- S3 Synchronization (s3_vectorDB.py):
  - Upload: Once indexing completes, the system triggers an automated sync that pushes the FAISS .index and metadata files to an Amazon S3 bucket.
  - Download: On service startup (systemd), the instance checks S3 for an existing index. If it finds one, it pulls the latest vector state to the local environment, so no knowledge is lost during redeployments.
- Query Execution: User queries are embedded and compared against the local FAISS index for sub-second retrieval. Because the index lives on local disk, inference never waits on S3.

🛠 Deployment Specifications

- Compute: AWS EC2 (t3.medium recommended) running Ubuntu 24.04.
- Process Management: Managed via systemd with a custom service unit, targeting 99.9% uptime.
- Object Storage: Amazon S3 for the persistent vector store and document backups.
- Infrastructure-as-Code: Bash-automated deployment via run_ec2.sh for one-click environment setup.

Updates Required for Deployment on AWS

The Google Gemini embedding model's usage quota is too limited for the project's requirements. For that reason, I switched the embedding model to an open-source, BERT-based model.
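The S3 synchronization step in the data lifecycle above can be sketched as two functions. The first uploads the index files after indexing finishes, and the second restores them at service startup. This is a hedged illustration, not the contents of s3_vectorDB.py. The function names, the `vectordb/` key prefix, and the file names are assumptions. The file names are LangChain's `FAISS.save_local()` defaults, not the `.index` file the text mentions. The S3 client is passed in as a parameter, so the sketch can be exercised with a stand-in object.

```python
from pathlib import Path

# Assumption: the index is saved with LangChain's FAISS.save_local(), which writes
# "index.faiss" (the vectors) and "index.pkl" (the docstore and id mapping).
INDEX_FILES = ("index.faiss", "index.pkl")
DEFAULT_PREFIX = "vectordb/"  # hypothetical S3 key prefix


def upload_index(s3, bucket: str, local_dir: str, prefix: str = DEFAULT_PREFIX) -> None:
    """Push the freshly built index files to S3 (run after indexing finishes)."""
    for name in INDEX_FILES:
        s3.upload_file(str(Path(local_dir) / name), bucket, prefix + name)


def download_index_if_present(s3, bucket: str, local_dir: str, prefix: str = DEFAULT_PREFIX) -> bool:
    """On startup, pull the latest index from S3. Returns False if none exists yet."""
    resp = s3.list_objects_v2(Bucket=bucket, Prefix=prefix)
    remote_keys = {obj["Key"] for obj in resp.get("Contents", [])}
    if not all(prefix + name in remote_keys for name in INDEX_FILES):
        return False  # nothing stored yet: the caller builds a fresh index instead
    Path(local_dir).mkdir(parents=True, exist_ok=True)
    for name in INDEX_FILES:
        s3.download_file(bucket, prefix + name, str(Path(local_dir) / name))
    return True
```

In production, the client would be `boto3.client("s3")`. Its `upload_file(Filename, Bucket, Key)`, `download_file(Bucket, Key, Filename)`, and `list_objects_v2(Bucket=..., Prefix=...)` methods match the calls above. The download function checks for every index file before downloading. That way, a first deployment with an empty bucket simply falls back to building a fresh index.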
CHANGES:

Remove:

```python
from langchain_google_genai import GoogleGenerativeAIEmbeddings
```

Add:

```python
from langchain_huggingface import HuggingFaceEmbeddings


def get_embeddings():  # hypothetical name: the original enclosing function is not shown
    model_name = "sentence-transformers/all-MiniLM-L6-v2"
    # Force the model to run on the CPU (the AWS Free Tier has no GPU)
    model_kwargs = {"device": "cpu"}
    # Return unit-length vectors
    encode_kwargs = {"normalize_embeddings": True}
    return HuggingFaceEmbeddings(
        model_name=model_name,
        model_kwargs=model_kwargs,
        encode_kwargs=encode_kwargs,
    )
```

Remove: The time.sleep() call from the llm_response file, because it is no longer needed.

Comment out: The body of the analyze_pii_data function in the Securities.py file. I am using an AWS Free Tier account, which does not have enough resources to install the required library, so also comment out these imports:

```python
# from presidio_analyzer import AnalyzerEngine
# from presidio_anonymizer import AnonymizerEngine
```

Remove: Any unnecessary libraries from requirements.txt.

NOTE: I am a beginner with AWS, so I used Gemini to help me deploy the website on AWS. I also used AI to improve the UI/UX design. My main goal and effort went into making the system/pipeline run without errors and solve a real-world problem.

🛠️ Tech Stack

This project is built using a robust selection of modern AI and web technologies:

| Component | Description |
|---|---|
| Frontend | Interactive web interface for easy file uploads and result visualization. |
| LLM Inference | Ultra-fast inference using open-source models (openai/gpt-oss-120b) via the Groq API. |
| Embeddings | High-performance embedding generation with models/gemini-embedding-001 (the AWS deployment described above switches to sentence-transformers/all-MiniLM-L6-v2). |
| Orchestration | Manages prompt engineering, retrieval chains, and LLM auditing. |
| Vector DB | Efficient similarity search for retrieving relevant documentation. |
| Cloud Storage | Persistent storage for the vector database to allow serverless-like scaling. |
| Security | Automated detection and redaction of PII (Personally Identifiable Information), prompt injection detection, and LLM auditing via a confidence score. |
| Compute | Hosted on scalable AWS EC2 instances for reliable availability. |

✨ Key Features

- 📄 Document Parsing: Robustly handles PDF knowledge bases and Excel/CSV questionnaires.
- 🔍 Smart Retrieval: Uses FAISS and Google Gemini embeddings to find the exact policy or technical spec needed.
- 🛡️ PII Protection: An integrated PII analyzer built on Microsoft Presidio prevents sensitive data from leaking into prompts.
- ⚡ Blazing-Fast Generation: Powered by Groq's LPU inference engine for near-instant answers.
- ☁️ Cloud-Persistent State: Automatically syncs vector indexes to AWS S3, so knowledge is never lost between restarts.
- 💉 Prompt Injection Defense: Basic heuristics detect and block malicious prompt injection attempts in incoming questions.
- 📊 Confidence Scoring: Every answer comes with a confidence score and reasoning, which helps reviewers judge how trustworthy it is.

Data Security and Integrity

- Prompt Injection: Every question in the RFP CSV file is checked for prompt injection before it is sent to the LLM.
- Personally Identifiable Information (PII): Personal data of employees and the company, such as email addresses, physical addresses, IP addresses, and names, is detected and hidden using the Microsoft Presidio Python library.
- Confidence Score: While answering each RFP question, the LLM also generates a confidence score from 0 to 100 and a reason for its answer. The reason cites the exact location of the supporting context in the policies.

📩 Contact

I am currently looking for roles in AI Engineering and Data Science. If you are looking for a developer who understands the intersection of AI research and production stability, let's connect.

- Author: Mohit Mundria
- Email: [email protected]
- LinkedIn: www.linkedin.com/in/mohit-mundria-31631a322
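The Data Security and Integrity section calls the prompt-injection defense "basic heuristics" and describes a 0 to 100 confidence score with a reason, but it does not show the code. The sketch below is a hypothetical illustration of both checks. The pattern list, the function names (`looks_like_injection`, `split_questions`, `parse_answer`), and the JSON reply format `{"answer", "confidence", "reason"}` are all assumptions, not the project's actual implementation.

```python
import json
import re

# Hypothetical phrase list: the project's real heuristics are not published.
INJECTION_PATTERNS = [
    r"\bignore\s+(all\s+|any\s+)?(previous|prior|above)\s+instructions\b",
    r"\bdisregard\s+(the\s+)?(system\s+prompt|previous\s+instructions)\b",
    r"\breveal\s+(your|the)\s+(system\s+prompt|instructions)\b",
]
_INJECTION_RE = re.compile("|".join(INJECTION_PATTERNS), re.IGNORECASE)


def looks_like_injection(question: str) -> bool:
    """Cheap keyword screen, run on every CSV question before it reaches the LLM."""
    return _INJECTION_RE.search(question) is not None


def split_questions(questions: list[str]) -> tuple[list[str], list[str]]:
    """Separate questions that are safe to send from those flagged for review."""
    safe = [q for q in questions if not looks_like_injection(q)]
    flagged = [q for q in questions if looks_like_injection(q)]
    return safe, flagged


def parse_answer(raw: str) -> dict:
    """Validate an LLM reply assumed to be JSON of the form
    {"answer": str, "confidence": 0-100, "reason": str}."""
    data = json.loads(raw)
    confidence = int(data["confidence"])
    if not 0 <= confidence <= 100:
        raise ValueError(f"confidence out of range: {confidence}")
    return {"answer": str(data["answer"]), "confidence": confidence, "reason": str(data["reason"])}
```

Keyword screens like this are easy to evade through paraphrasing. They work as a cheap first filter, not a guarantee. Rejecting an out-of-range score keeps a malformed model reply from being presented as a trustworthy answer.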

Related Products

ZapDM - Automate your Instagram DMs with AI-powered responses that feel personal and authentic

ZapDM is a Meta-approved Instagram DM automation platform that turns comments in

Outpacer.ai

Write daily. Rank higher.

MailDeck | Scalable Cold Email Infrastructure

The enterprise-grade inbox provider for cold email teams sending 100k+ a month

Sentra-AI

AI-powered reputation manager for agencies to automate client review growth.

AppReviewTool

AI-powered App Store review analyzer with $0 monthly operating cost.